This is a long one, so please bear with me...

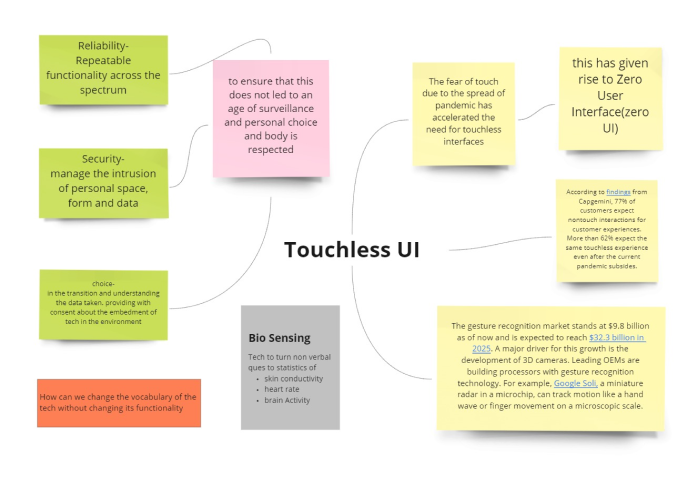

As a human-centered designer, I expect the next few years to bring a dynamic shift in interface design. Amplified by the pandemic, touchless and embodied interactions are set to disrupt both customer-facing and personal experiences. With gesture-based interaction as one of the frontiers of this new model of interaction, I wanted to explore the possibility of developing one holistic interaction model that uses Natural User Interaction as the primary mode of communication, creating an interaction system that is truly seamless, intuitive, and adaptive to the user. Incorporating such a model into HCI would shift the focus from having humans adapt to the language of technology to having technology adapt to, and simulate, human behaviors.

Developing a vocabulary for instructional and navigational interactions within XR environments

Analysing more uniform and universal interaction systems

"You are part of the design team. Before presenting the chair's hologram to your clients, you need to fix part of its design. You put on your glasses, activate (switch on) the device, and view the chair design. You view it from different perspectives, scale it up and down, and increase and decrease its size (for example, from 150 cm to 300 cm). Once happy with the final design, you initiate (start) the process of rendering the chair. Midway through the render, you realize that you need to make another change, so you terminate (stop) the process. After making the change, you initiate the process again and let it complete. Happy with your design, you deactivate (switch off) your device, take it off, and head to work."

55% of the participants drew their influences from interaction models they had seen in movies. Of those participants, 45% drew their inspiration from one specific character and franchise: Iron Man (Tony Stark).

For many of the tasks, most participants instinctively used voice commands to initiate the process. When deprived of that option, they used gestures along with an imaginary UI to perform the respective tasks.

Interestingly, many participants relied on gaze-based navigation and wink- or blink-based interactions to control the mixed reality space, especially in crowded settings.

Frequent interaction with digital devices has conditioned the current and upcoming generations to perform specific gestures that, while not originally organic, have become deeply ingrained in muscle memory. This evolution is embedding digital gestures into what is now perceived as ‘natural’ interaction, blurring the boundary between physical and screen-based experiences.